How AI is making us confidently ignorant

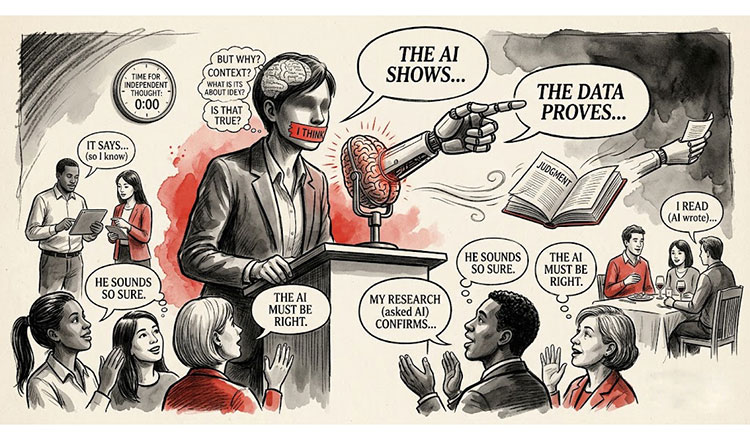

To prevent a future peopled by confident but shallow thinkers, we must treat AI as a starting point rather than a conclusion. Khmer Times/AI generated

#opinion

There is a particular kind of dangerous person in every meeting room today. They speak with authority. They cite figures. They have answers. But they have not really thought about any of it. They asked an AI.

This is not an argument against artificial intelligence or the people who use it. It is about what happens when the tool begins to do the thinking for us. It is about a quiet crisis spreading through offices, classrooms, and dinner tables: the rise of the confidently ignorant.

We’ve seen this phenomenon before. The internet gave us the “Google doctor,” someone who spends 20 minutes searching symptoms online and arrives at the clinic convinced they have a rare disease. The limitation was obvious. They possessed information but lacked the judgment needed to interpret it. AI has made this person even more persuasive. When you ask an AI a question, it provides you with a concise, confident paragraph devoid of contradictions. It sounds exactly like an expert. The problem is that the answer might be wrong, incomplete, or addressing the wrong question entirely. Yet nothing in the tone signals uncertainty, so the user rarely questions it.

Consider what is happening in universities. Students can now submit polished essays on topics they’ve never genuinely engaged with. The AI has done the heavy lifting. But the struggle of writing an essay is not a punishment; it is learning. Sitting with a blank page forces a person to test ideas, organise evidence, and discover where their reasoning fails. Remove that friction and you remove the formation of judgment.

In the workplace, this is already reshaping how decisions get made, and badly. A colleague recently described a senior manager who relied on AI to produce market analyses. The outputs were impressive, structured, and detailed. What was missing was the experience to know the framing was wrong from the start, that the AI had confidently answered a different question than the one the business actually needed answered. The manager never developed the instinct to catch that, because developing instinct requires years of being wrong and understanding why.

There is a concept in psychology called “calibration,” which refers to the alignment between confidence and actual accuracy. A well-calibrated person might say, “I am about 60% sure,” and over time they are correct roughly 60% of the time. Their confidence matches the limits of their knowledge.

AI produces uncalibrated confidence in its users. Because the tool sounds authoritative, users absorb that authority. They stop saying “I think” and start saying “The research shows.” They stop hedging and start asserting. They lose the very self-awareness that separates genuine expertise from its impersonation.

Real experts are distinguished not primarily by what they know, but by their precise awareness of what they don’t know. A seasoned doctor, lawyer, or engineer will tell you the limits of their certainty. That awareness is hard-won. It comes from years of encountering the unexpected, of being humbled by complexity.

None of this means we should abandon these tools. It means we should use them with the same caution we would apply to any powerful shortcut.

A calculator does not make you a mathematician. A GPS does not make you a navigator. And an AI that writes your analysis does not make you an analyst. The shortcut has its place, but it cannot replace the long road that builds judgment.

We now live in a world where more answers are instantly available than at any time in human history. The risk is that easy answers may slowly erode our ability to ask better questions.

If we want to avoid a future full of confident but shallow thinking, we should treat AI as a starting point rather than a conclusion. Encourage people to explain how they reached their answer. Ask what assumptions the tool made. Require independent reasoning before accepting a generated response. Most importantly, preserve the slow and sometimes uncomfortable process of thinking things through.

Convenience should help us think better, not replace the thinking altogether.

Dr Heng Pheakdey is an independent policy analyst and digital strategist.

-Khmer Times-